AI vision systems vs. physics:

How object geometry and material properties determine inspection success

Why physics still limits AI in machine vision

Today we focus on how strongly the geometric and optical properties of the inspected object itself, the substrate on which the features appear, determine the design of a machine vision system.

In practice, this is often where it is decided whether an inspection system will work robustly or fail due to basic physics.

How object geometry impacts machine vision performance

Geometry of the substrate: flat, ribbed, curved

The geometry of the inspected object plays a central role and directly affects how light interacts with the surface and how stable features appear:

- Flat, planar surfaces can usually be imaged comparatively easily with classic setup variants (coaxial, dome, area lighting).

- Structured, ribbed, textured, or milled surfaces generate strong intensity variations depending on illumination direction, which can mask or mimic defect contrast.

- Curved or strongly 3D‑shaped parts lead to highly variable incidence and reflection angles, causing local overexposure and shadowing.

This has direct consequences for camera and illumination placement:

Best lighting strategies for different surface geometries

- For planar surfaces, the optical axis can often be oriented almost perpendicular to the object; illumination is then chosen to emphasize specific reflective or diffuse components (e.g. coaxial or dome lighting).

- For ribbed structures, a combination of several illumination directions is often beneficial to minimize directional effects; alternatively, a deliberately chosen oblique illumination can highlight certain edges while suppressing others.

- For curved parts, a single point‑like light source is almost always problematic. Large, preferably enclosing sources (domes, large‑area lights, large‑diameter ring lights) or multiple/moving illumination positions are usually more robust.
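To make the curved-part argument concrete, the sketch below estimates how far off-axis the light must arrive for an on-axis camera to see the specular reflection across a cylindrical section. It assumes an ideal mirror-like surface, a distant camera looking straight down, and a cylinder axis perpendicular to the view; the function name is hypothetical. Because incoming and reflected rays are symmetric about the surface normal, a normal tilted by θ requires light arriving at 2θ from the camera axis.

```python
import math


def required_light_half_angle_deg(radius_mm: float, half_width_mm: float) -> float:
    """Hypothetical helper: half-angle from the camera axis from which light must
    arrive so that an on-axis camera sees the specular reflection across a
    cylindrical section of width 2 * half_width_mm.

    Assumptions: ideal mirror-like surface, distant camera looking straight down,
    cylinder axis perpendicular to the viewing direction.
    """
    if not 0.0 <= half_width_mm <= radius_mm:
        raise ValueError("half_width_mm must lie between 0 and radius_mm")
    # Tilt of the surface normal at the edge of the inspected region.
    normal_tilt = math.asin(half_width_mm / radius_mm)
    # Law of reflection: incoming and reflected rays are symmetric about the
    # normal, so the light must come from twice the normal tilt off the camera axis.
    return math.degrees(2.0 * normal_tilt)


if __name__ == "__main__":
    for half_width in (0.0, 5.0, 10.0, 14.0):
        angle = required_light_half_angle_deg(20.0, half_width)
        print(f"±{half_width:4.1f} mm on R = 20 mm -> light needed up to {angle:5.1f}° off-axis")
```

For a 20 mm radius inspected over ±10 mm, the normal tilts by up to 30°, so light must arrive from up to 60° off-axis, far more than a small coaxial or spot source provides. Beyond a half-width of about R·sin 45° (roughly 0.71 R), the required angle exceeds 90°, so those regions cannot be lit specularly from above at all under these assumptions.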

Especially for AI applications, the setup should prevent purely shape‑driven artifacts (e.g. hotspots on curved regions) from being learned as defects. A geometry‑aware illumination concept is therefore essential.
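One simple safeguard is to check every training and production image for clipped pixels: saturated hotspots and fully black shadows carry no usable surface information, and if they cluster on curved regions, they are shape-driven rather than defect-driven. The sketch below assumes a single-channel integer image; the function name and the 0.1 % threshold are hypothetical and would need tuning per application.

```python
import numpy as np


def exposure_report(image: np.ndarray, bit_depth: int = 8, max_clipped_fraction: float = 0.001) -> dict:
    """Hypothetical check: share of clipped pixels in a single-channel image.

    Overexposed pixels (hotspots) and underexposed pixels (deep shadows) carry
    no usable surface information. The 0.1 % default threshold is an assumption.
    """
    full_scale = 2 ** bit_depth - 1
    over = float(np.mean(image >= full_scale))
    under = float(np.mean(image <= 0))
    return {
        "overexposed_fraction": over,
        "underexposed_fraction": under,
        "ok": over <= max_clipped_fraction and under <= max_clipped_fraction,
    }


if __name__ == "__main__":
    frame = np.full((100, 100), 128, dtype=np.uint8)
    frame[40:50, 40:50] = 255  # simulated specular hotspot on a curved region
    print(exposure_report(frame))
```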

Optical properties: matte vs. reflective vs. transparent surfaces

Beyond geometry, the material properties of the substrate determine how light interacts with the surface:

- Matte, diffusely reflecting surfaces scatter light largely independent of incidence angle; contrast mainly arises from differences in reflectance.

- Reflective, glossy surfaces follow the law of reflection more closely; small angular changes can cause large intensity differences.

- Transparent or translucent materials add transmission, refraction, and volume scattering to the mix.

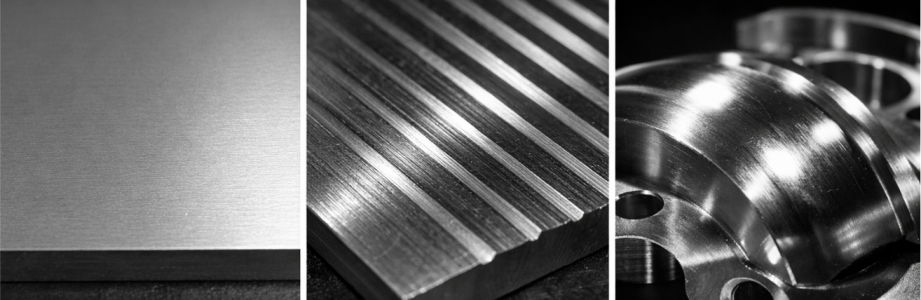

Glossy surface

Structured surface

Matte surface
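The difference between matte and glossy behaviour can be illustrated with two textbook reflection models: Lambert's cosine law for diffuse surfaces and the Phong specular lobe, a common computer-graphics approximation for glossy ones. The sketch assumes light and camera share one axis (as with coaxial lighting) and uses a hypothetical shininess exponent of 200; it shows the trend, not real intensities.

```python
import math


def lambertian(tilt_deg: float, albedo: float = 1.0) -> float:
    # Matte model (Lambert's cosine law): brightness follows the cosine of the
    # incidence angle and does not depend on the camera position.
    return albedo * max(0.0, math.cos(math.radians(tilt_deg)))


def phong_specular(tilt_deg: float, shininess: float = 200.0, ks: float = 1.0) -> float:
    # Glossy model (Phong specular lobe): brightness follows cos^n of the angle
    # between the mirror direction and the viewing direction. With light and
    # camera on one axis, tilting the surface by t rotates the mirror direction by 2t.
    alpha = math.radians(2.0 * tilt_deg)
    return ks * max(0.0, math.cos(alpha)) ** shininess


if __name__ == "__main__":
    for tilt in (0.0, 1.0, 2.0, 5.0):
        print(f"tilt {tilt:3.0f}°  matte {lambertian(tilt):.3f}  glossy {phong_specular(tilt):.3f}")
```

Under these assumptions, tilting the surface by only 5° changes the matte response by well under 1 %, while the glossy response drops to around 5 % of its peak: the angular sensitivity described above.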

Choosing the right illumination for each material type

- Matte surfaces: The goal is a homogeneous, stable illumination with high signal‑to‑noise ratio. Area lights, diffusers, and, if needed, polarization filters on both light and lens help reduce residual glare.

- Glossy surfaces: It must be decided explicitly whether the specular component is to be used or suppressed. Coaxial illumination is suitable for rendering planar, mirror‑like surfaces uniformly bright while defects (scratches, residues) generate contrast. Low‑angle or darkfield lighting, by contrast, emphasizes edges, scratches, and topographic defects.

- Transparent/translucent surfaces: Backlight setups are often useful to highlight edges and inhomogeneities in transmission. For surface defects on transparent parts, oblique or coaxial illumination, again often combined with polarization, helps control disturbing reflections from the environment.
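The glare-reducing effect of polarization filters on both light and lens (cross-polarization) can be estimated with Malus's law. The sketch rests on idealized assumptions: the light-side filter produces fully linearly polarized light, specular reflection preserves that polarization, diffuse reflection fully depolarizes it, and the filters are lossless. Real surfaces, metals at oblique angles in particular, deviate from this, so actual suppression is weaker. The function name is hypothetical.

```python
import math


def through_analyzer(specular: float, diffuse: float, analyzer_angle_deg: float) -> float:
    """Hypothetical estimate of the intensity behind the lens-side polarizer.

    Idealized assumptions: fully linearly polarized illumination, specular
    reflection keeps its polarization, diffuse reflection is fully depolarized,
    lossless filters. analyzer_angle_deg is measured relative to the light polarizer.
    """
    phi = math.radians(analyzer_angle_deg)
    # Malus's law for the still-polarized specular (glare) component.
    specular_part = specular * math.cos(phi) ** 2
    # An ideal polarizer passes half of unpolarized light at any orientation.
    diffuse_part = 0.5 * diffuse
    return specular_part + diffuse_part


if __name__ == "__main__":
    for angle in (0, 45, 90):
        print(angle, round(through_analyzer(specular=10.0, diffuse=1.0, analyzer_angle_deg=angle), 3))
```

With the analyzer crossed at 90°, a glare component ten times stronger than the diffuse signal is ideally removed completely while half of the diffuse light still passes; the price is a darker image that needs more light or longer exposure.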

Camera placement is tightly coupled to these properties: if the specular reflection is intentionally exploited, the camera should be placed in the reflection direction; if the reflection is undesirable, the camera is deliberately tilted out of the specular direction.
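Whether a given camera position sits in the specular direction (brightfield) or outside it (darkfield) follows from the vector form of the law of reflection, r = d - 2(d·n)n. The helpers below are a hypothetical sketch; they assume all direction vectors share one coordinate frame and use a small angular tolerance as a stand-in for the finite aperture and source size.

```python
import numpy as np


def reflect(direction: np.ndarray, normal: np.ndarray) -> np.ndarray:
    # Vector form of the law of reflection: r = d - 2 (d . n) n for unit n.
    d = direction / np.linalg.norm(direction)
    n = normal / np.linalg.norm(normal)
    return d - 2.0 * np.dot(d, n) * n


def camera_sees_specular(light_travel_dir, surface_normal, to_camera_dir, tolerance_deg: float = 2.0) -> bool:
    """True if the camera lies within tolerance_deg of the mirror direction
    (brightfield); False means it only collects scattered light (darkfield).

    light_travel_dir: direction in which light travels towards the surface.
    to_camera_dir: direction from the surface point towards the camera.
    """
    r = reflect(np.asarray(light_travel_dir, dtype=float), np.asarray(surface_normal, dtype=float))
    c = np.asarray(to_camera_dir, dtype=float)
    c = c / np.linalg.norm(c)
    angle = np.degrees(np.arccos(np.clip(np.dot(r, c), -1.0, 1.0)))
    return bool(angle <= tolerance_deg)


if __name__ == "__main__":
    down, up = (0.0, 0.0, -1.0), (0.0, 0.0, 1.0)
    tilted = (np.sin(np.radians(5.0)), 0.0, np.cos(np.radians(5.0)))
    print(camera_sees_specular(down, up, up))      # flat part, coaxial light
    print(camera_sees_specular(down, tilted, up))  # normal tilted by 5°
```

For a flat part under coaxial light, the mirror direction points straight back into the camera. Tilting the surface normal by just 5° swings it by 10°, out of a 2° tolerance cone, which illustrates why coaxial brightfield suits planar parts but not curved ones.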

Handling real-world variability: Rust, aging, and environmental effects

Scenarios where the substrate itself varies strongly are particularly challenging – either locally within one part or globally between parts. Typical examples include:

- Rust on metal parts stored outdoors

- Weather‑aged railway sleepers/ties

- Surfaces altered by dirt, moisture, or biological growth

In these cases, geometric and optical changes overlap, and a perfect reference surface no longer exists. Typical effects include:

- Geometric changes (flaking, surface roughening, wear)

- Optical changes (color shifts, modified roughness and thus changed reflectance)

- Partial, strong heterogeneity both within one sample and across different samples

Why lighting consistency is critical for AI models

For the illumination design, this implies:

- Ideal “reference images” with perfect homogeneity are practically unattainable. The setup must instead be made as robust as possible against these variations.

- Large, diffuse light sources (domes, large area panels) help to avoid local extreme reflections and average out microscopic roughness.

- A carefully chosen incidence angle can ensure that rough, corroded structures remain visible but do not cause every rust spot to dominate the image when the actual focus is on other defect types (e.g. cracks or structural damage).

Temporal stability also matters:

- Outdoor systems are subject to changing ambient light, dirt, and moisture.

- Shielding from ambient light, robust, enclosed lighting assemblies, and regular recalibration (potentially using reference targets) are essential.
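Recalibration with a reference target is commonly done as flat-field correction: a uniform target (e.g. a white or gray tile) is imaged under the production lighting, and its per-pixel response is divided out of each inspection image. The sketch below assumes such a target and a dark frame are available; the function names are hypothetical, and any alarm threshold on the drift value would be application-specific. Flat-field correction compensates for spatial non-uniformity and slow lamp drift, not for changing ambient light, which still requires shielding.

```python
import numpy as np


def flat_field_correct(raw: np.ndarray, flat: np.ndarray, dark: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    """Flat-field correction using a uniform reference target.

    raw:  inspection image
    flat: image of a uniform reference target under the same illumination and exposure
    dark: image taken with the illumination off or the lens capped
    """
    raw = raw.astype(np.float64)
    dark = dark.astype(np.float64)
    # Per-pixel illumination response; eps avoids division by zero in dead areas.
    gain = np.maximum(flat.astype(np.float64) - dark, eps)
    # Divide out illumination non-uniformity, then rescale to the average level.
    return (raw - dark) * gain.mean() / gain


def illumination_drift(current_flat: np.ndarray, baseline_flat: np.ndarray) -> float:
    # Relative change of the mean reference-target brightness since commissioning.
    baseline = float(np.mean(baseline_flat))
    return (float(np.mean(current_flat)) - baseline) / baseline


if __name__ == "__main__":
    flat = np.tile(np.linspace(100.0, 200.0, 8), (4, 1))  # light falls off to one side
    dark = np.zeros_like(flat)
    raw = 0.5 * flat                                      # uniform 50 % reflectance part
    corrected = flat_field_correct(raw, flat, dark)
    print(corrected.max() - corrected.min())              # spread after correction
    print(illumination_drift(0.95 * flat, flat))          # lamp got darker
```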

AI models benefit greatly if the lighting setup captures the normal variability of the substrate consistently, enabling true anomalies to be detected reliably despite strongly changing surface conditions.

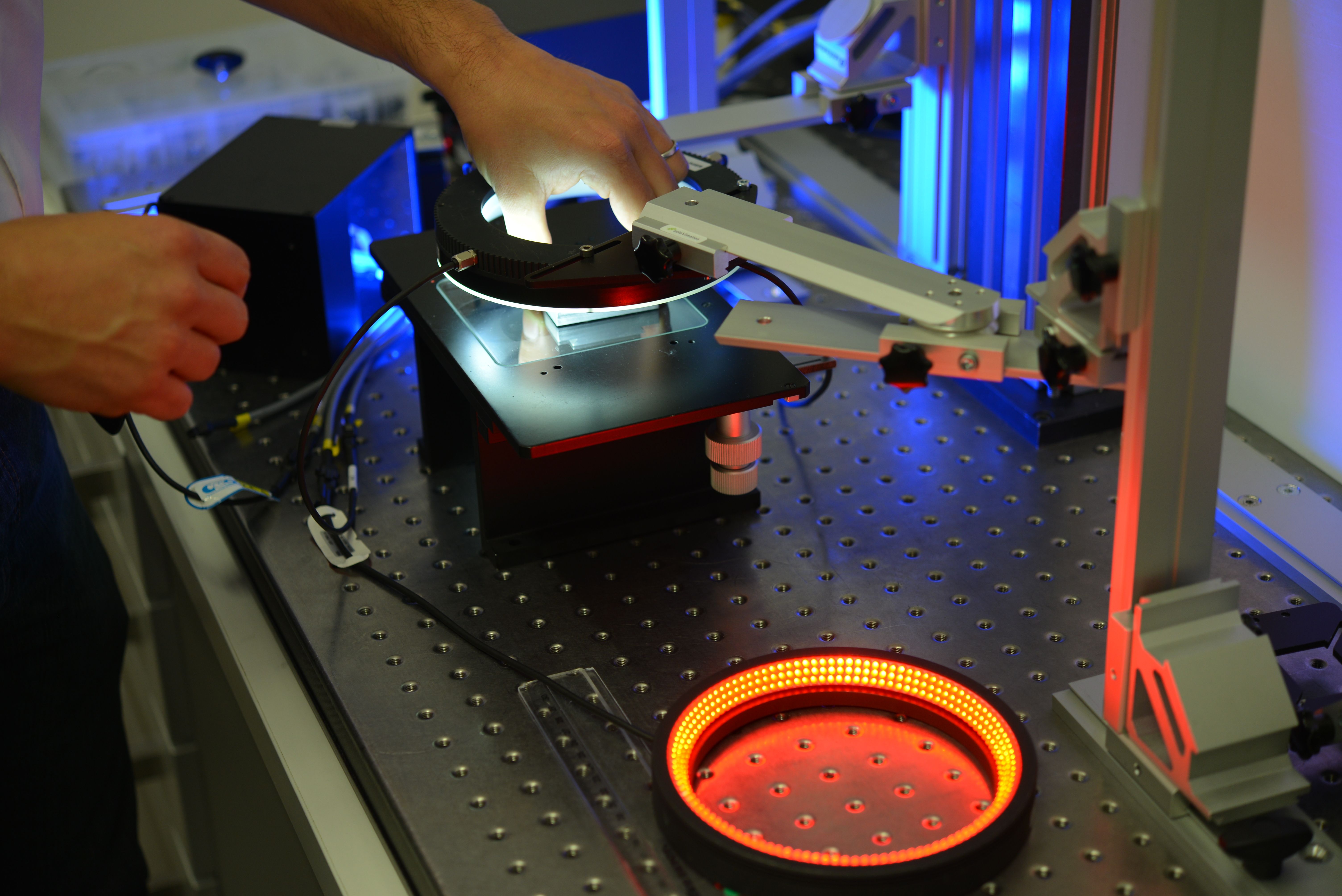

Camera and illumination placement: The key design decision

All these aspects converge in one key design decision: where camera and lighting are placed in 3D space. Depending on the surface and defect type, different configurations are required:

- On‑axis vs. off‑axis: Is light coupled along the optical axis (coaxial), or are tilted light sources used?

- Ring, line, area, or dome illumination: Should the light be strongly directional or broadly distributed?

- Static vs. sequential setups: Is one illumination configuration per acquisition sufficient, or are several lighting variants needed in sequence to reveal different defect types and surface effects?
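A sequential setup can be sketched as a list of lighting configurations that are switched on one at a time, with one exposure per variant. `LightingConfig`, `acquire_sequence`, and the `set_lighting`/`grab` callbacks are hypothetical placeholders for vendor-specific controller and camera calls, not a real SDK API; the exposure times are illustrative only. The sketch assumes the part does not move between shots.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

import numpy as np


@dataclass(frozen=True)
class LightingConfig:
    name: str          # e.g. "darkfield", "coaxial", "dome"
    channel: int       # hypothetical output on the lighting controller
    exposure_us: int   # exposure time tuned for this lighting variant


def acquire_sequence(
    configs: List[LightingConfig],
    set_lighting: Callable[[LightingConfig], None],
    grab: Callable[[int], np.ndarray],
) -> Dict[str, np.ndarray]:
    """Capture one image per lighting variant while the part stays in place.

    set_lighting and grab stand in for vendor-specific controller and camera
    calls; they are placeholders, not a real SDK API.
    """
    images: Dict[str, np.ndarray] = {}
    for cfg in configs:
        set_lighting(cfg)                        # enable only this variant
        images[cfg.name] = grab(cfg.exposure_us)
    return images


if __name__ == "__main__":
    sequence = [
        LightingConfig("darkfield", channel=1, exposure_us=4000),
        LightingConfig("coaxial", channel=2, exposure_us=800),
        LightingConfig("dome", channel=3, exposure_us=1500),
    ]
    log: List[str] = []
    shots = acquire_sequence(
        sequence,
        set_lighting=lambda cfg: log.append(cfg.name),
        grab=lambda exposure: np.zeros((480, 640), dtype=np.uint8),  # simulated camera
    )
    print(log, {name: img.shape for name, img in shots.items()})
```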

Multi-illumination techniques for robust AI inspection: better data for better decisions

For strongly variable or complex surfaces, it is often useful to

- acquire the scene with multiple, differently oriented light sources,

- generate multiple images with different illumination setups (for example, a combination of darkfield, coaxial, and dome illumination),

- fuse these images into a single combined “illumination image” where appropriate (e.g. channel‑wise, using max/min operations or weighted averaging), and

- only then feed this derived, multi‑illumination image into the AI model for evaluation.
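The fusion step can be sketched with NumPy. `fuse_illuminations` is a hypothetical helper, not a specific product's API; it assumes the images are already co-registered (the part did not move between shots) and have the same size.

```python
from typing import Optional, Sequence

import numpy as np


def fuse_illuminations(images: Sequence[np.ndarray], mode: str = "stack",
                       weights: Optional[Sequence[float]] = None) -> np.ndarray:
    """Combine co-registered single-channel images taken under different lighting.

    stack    -> H x W x N array, one channel per illumination (keeps all information)
    max      -> per-pixel maximum (fills shadows that occur in only some images)
    min      -> per-pixel minimum (removes hotspots that occur in only some images)
    weighted -> per-pixel weighted average with normalized weights
    """
    stack = np.stack([np.asarray(img, dtype=np.float32) for img in images], axis=0)
    if mode == "stack":
        return np.moveaxis(stack, 0, -1)
    if mode == "max":
        return stack.max(axis=0)
    if mode == "min":
        return stack.min(axis=0)
    if mode == "weighted":
        if weights is None or len(weights) != stack.shape[0]:
            raise ValueError("weighted mode needs one weight per image")
        w = np.asarray(weights, dtype=np.float32)
        w = w / w.sum()
        # Contract the image axis: sum over i of w[i] * stack[i]
        return np.tensordot(w, stack, axes=1)
    raise ValueError(f"unknown fusion mode: {mode}")


if __name__ == "__main__":
    darkfield = np.array([[0, 10], [20, 30]], dtype=np.uint8)
    dome = np.full((2, 2), 5, dtype=np.uint8)
    print(fuse_illuminations([darkfield, dome], "max"))
    print(fuse_illuminations([darkfield, dome], "weighted", weights=[1, 3]).round(2))
```

Channel-wise stacking keeps every illumination available to the model, so it can learn which lighting reveals which defect type. Max and min fusion are more aggressive: they suppress shadows or hotspots that appear under only some lighting directions, but they can also erase a defect that is visible in only one image.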

This makes the inspection less sensitive to local outliers (hotspots, shadows) and helps the evaluation – whether classical or AI‑based – to better separate intensity patterns that correspond to relevant features from those caused purely by material and illumination effects.

Why understanding physics is essential for AI vision

A careful analysis of the geometry and optical properties of the substrate is not a theoretical luxury but the foundation of every robust vision solution. Skipping this step and “simply selecting a camera” risks preventing both classical algorithms and AI models from ever reaching their full potential.

FAQ – Machine vision, AI inspection & illumination

Why does my AI inspection system perform poorly even with a good model?

In many cases, the issue is not the model but the image quality. If lighting, geometry, and material properties are not properly controlled, the system learns unstable or irrelevant features. Even the best AI cannot compensate for poor physical conditions.

What is the best lighting for machine vision?

There is no universal solution. The optimal lighting depends on the object’s geometry and material. Flat surfaces often work well with coaxial or diffuse lighting, while structured or curved parts usually require multi-directional or large-area illumination.

Why are glossy, reflective surfaces so difficult to inspect?

Because small changes in angle can cause large variations in reflected light intensity. This leads to glare, hotspots, and unstable contrast, which can confuse both traditional algorithms and AI models.

Can more training data compensate for poor image quality?

Only to a limited extent. While more data can help, it cannot fully compensate for inconsistent or physically flawed image acquisition. Improving the lighting setup is often far more effective than increasing dataset size.

How can I make my AI inspection more reliable?

Focus on consistent and physics-aware imaging. Use stable lighting, reduce unwanted reflections, and design your setup to handle natural variability in materials and surfaces. In many cases, combining multiple illumination strategies significantly improves reliability.

Your AI is only as good as your images.

If your inspection system struggles with false positives, unstable results, or poor generalization, the root cause is often the physical setup, not the algorithm.

We help you design vision systems that work reliably in real-world conditions by aligning lighting, camera placement, and material behaviour from the start.
