AI & image acquisition

The spatial distribution of features

In the first part of this series, we discussed the fundamental importance of image quality in AI-based machine vision. Today, we focus on another key aspect: how are the features to be inspected spatially distributed?

This aspect influences the design and development of a machine vision system in several ways, and it immediately raises the question of which options exist for integrating the system into the available space.

Integration into existing production environments

A machine vision system is almost never designed without constraints. In most cases, the optical inspection system must be integrated into an existing production line. Installation space, cycle time, mechanics and process flow are already defined.

And sometimes it becomes clear very quickly: physics cannot be negotiated.

If only 100 mm of installation space is available and an inspection area of 250 × 250 mm must still be captured at the required resolution, a single-camera solution will inevitably reach its limits. In such cases, alternative concepts are required instead of wishful thinking.
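To make the geometry behind this example concrete, here is a minimal sketch based on a simple pinhole model. It assumes, optimistically, that the full 100 mm is usable as working distance (in practice the camera and lens body take up part of it). The sensor width of 8.8 mm and both function names are illustrative assumptions, not values from a specific camera.

```python
import math


def required_angle_of_view_deg(field_mm: float, working_distance_mm: float) -> float:
    """Full angle of view needed to cover field_mm from working_distance_mm (pinhole model)."""
    return math.degrees(2.0 * math.atan((field_mm / 2.0) / working_distance_mm))


def approx_focal_length_mm(sensor_mm: float, field_mm: float, working_distance_mm: float) -> float:
    """Pinhole approximation: focal length that maps field_mm onto sensor_mm."""
    return sensor_mm * working_distance_mm / field_mm


if __name__ == "__main__":
    # Values from the text: 250 mm field side, 100 mm of space
    # (assumed here to be fully usable as working distance).
    angle = required_angle_of_view_deg(250.0, 100.0)
    # Hypothetical sensor width of 8.8 mm, chosen only for illustration.
    focal = approx_focal_length_mm(8.8, 250.0, 100.0)
    print(f"angle of view: {angle:.1f} deg, focal length: {focal:.2f} mm")
```

Under these assumptions, a single camera would have to cover roughly 103° along one side of the field, and more across the diagonal. That calls for an extreme wide-angle lens, which typically brings distortion and uneven resolution towards the image edges.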

For the following considerations, let us assume that fundamental parameters such as focal length, minimum object distance (MOD) and depth of field are generally achievable. The focus then shifts to other questions.

Ratio of inspection area to feature size

How large is the inspection area? And how small are the relevant features in comparison?

From this relationship, several aspects arise:

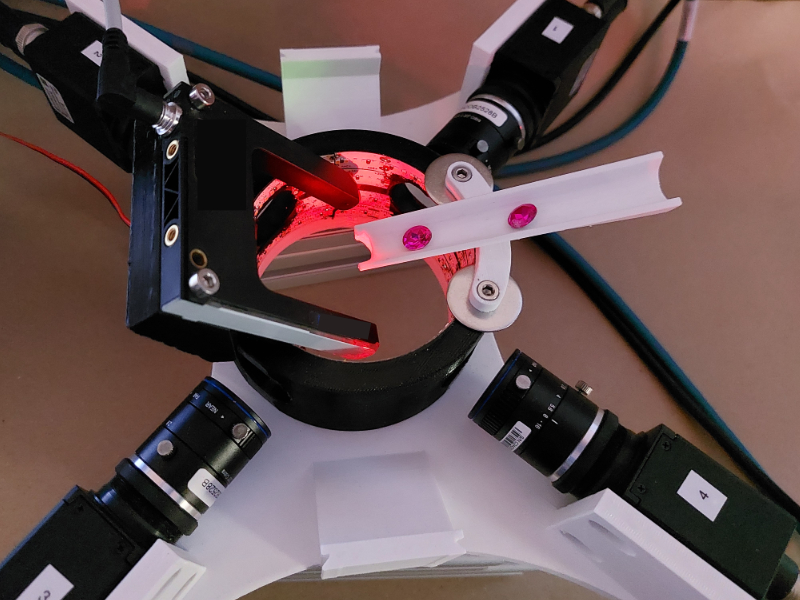

- Number of required cameras, lenses and lighting units

- Definition of potential overlap areas

- Calibration requirements

- Synchronization of image acquisition

The larger the field of view and the finer the required detail, the more complex the overall system architecture becomes.
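The relationship between inspection area, feature size and camera count can be estimated with a few lines of arithmetic. The following is a rough sketch, not a sizing tool. The rule of about 3 pixels across the smallest feature, the 10 % overlap between neighbouring fields of view and the function names are assumptions made for illustration; the values that are actually required depend on the application and the AI model.

```python
import math


def required_pixels(field_mm: float, feature_mm: float, pixels_per_feature: float = 3.0) -> int:
    """Pixels needed along one axis so the smallest feature spans pixels_per_feature pixels."""
    return math.ceil(field_mm / feature_mm * pixels_per_feature)


def cameras_per_axis(
    field_mm: float,
    feature_mm: float,
    sensor_pixels: int,
    pixels_per_feature: float = 3.0,
    overlap_fraction: float = 0.1,
) -> int:
    """Cameras needed along one axis, with neighbouring fields of view overlapping."""
    mm_per_pixel = feature_mm / pixels_per_feature      # required object-side resolution
    fov_per_camera_mm = sensor_pixels * mm_per_pixel    # field one camera covers at that resolution
    if fov_per_camera_mm >= field_mm:
        return 1
    # Each additional camera adds its field of view minus the overlap strip.
    step_mm = fov_per_camera_mm * (1.0 - overlap_fraction)
    return math.ceil((field_mm - fov_per_camera_mm) / step_mm) + 1


if __name__ == "__main__":
    # Hypothetical example: 250 mm field side, 0.25 mm features, 2048-pixel sensor axis.
    print("pixels per axis:", required_pixels(250.0, 0.25))
    print("cameras per axis:", cameras_per_axis(250.0, 0.25, 2048))
```

Every additional camera in such an estimate also adds a lens, lighting, an overlap region to define, and calibration and synchronization effort, which is why the ratio drives system complexity so directly.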

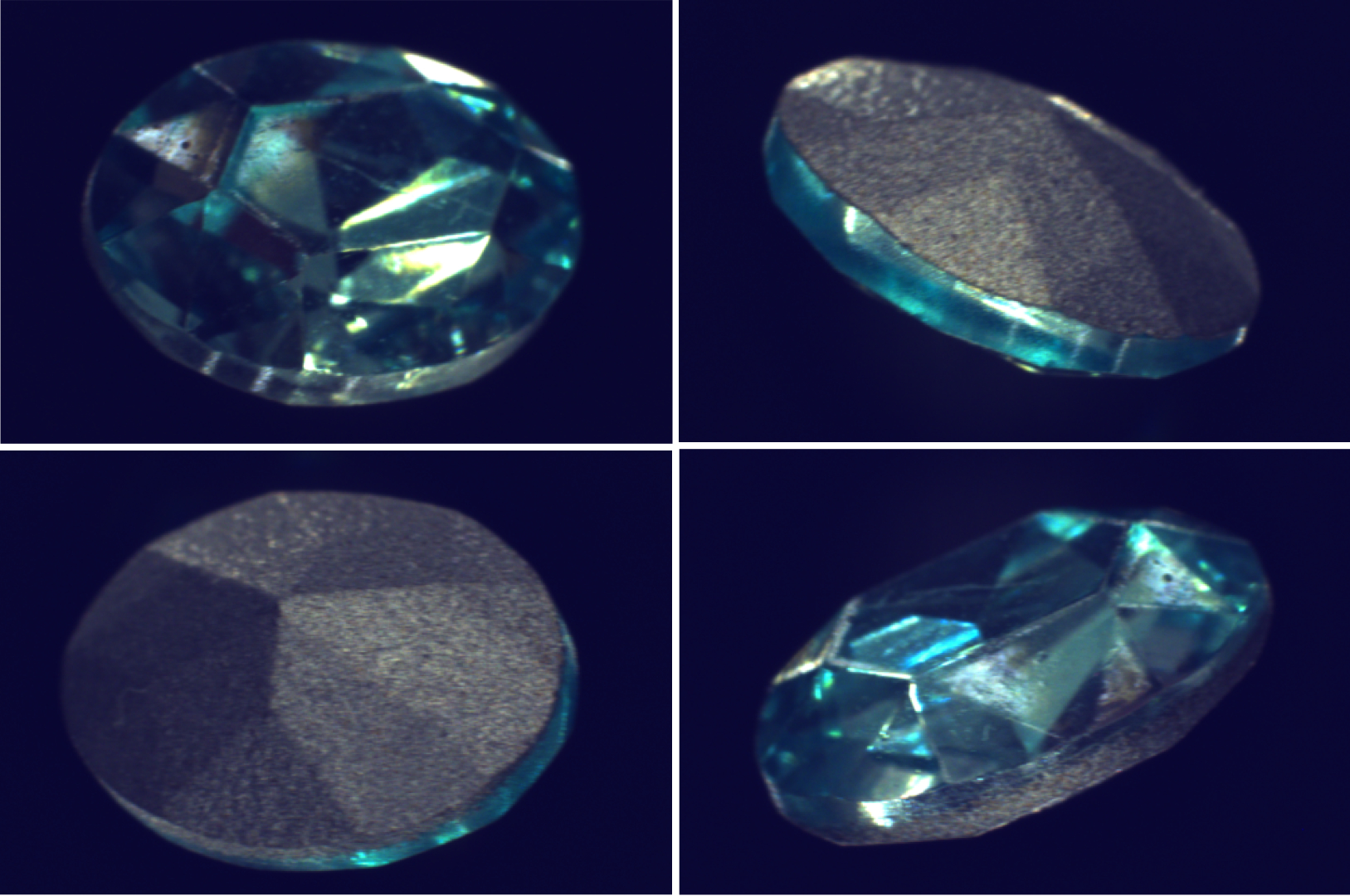

Surface geometry

Is the inspection area flat, curved or stepped?

Surface geometry directly affects the required depth of field and therefore the optical system design.

Possible approaches include:

- Increasing depth of field by reducing the aperture

- Applying focus stacking methods

- Using liquid lens systems

- Alternatively, performing a robot-guided scanning process with one or more line-scan or area-scan cameras

Not every solution is process-stable or economically viable. What matters is the interaction between mechanics, optics and cycle time.
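How much depth of field a smaller aperture buys can be estimated with the common close-up approximation DOF ≈ 2·N·c·(m + 1) / m², where N is the f-number, c the acceptable circle of confusion and m the magnification. The sketch below uses that approximation only. The numeric values and the function name are illustrative assumptions, and the formula ignores diffraction, which limits how far stopping down helps.

```python
def approx_depth_of_field_mm(f_number: float, coc_mm: float, magnification: float) -> float:
    """Close-up approximation of total depth of field: 2 * N * c * (m + 1) / m^2."""
    if magnification <= 0:
        raise ValueError("magnification must be positive")
    return 2.0 * f_number * coc_mm * (magnification + 1.0) / magnification**2


if __name__ == "__main__":
    # Hypothetical setup: circle of confusion 0.01 mm, magnification 0.1.
    for n in (4.0, 8.0, 16.0):
        dof = approx_depth_of_field_mm(n, 0.01, 0.1)
        print(f"f/{n:g}: depth of field of about {dof:.1f} mm")
```

In this approximation, depth of field grows linearly with the f-number, while the light reaching the sensor falls with its square. Going from f/8 to f/16 doubles the depth of field but needs about four times the exposure or illumination, which feeds straight back into cycle time.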

Number of sides to be inspected

Does the object require inspection on one side or multiple sides?

This question directly determines:

- The total number of cameras, lenses and lighting units

- Whether object tracking or rotation mechanisms are required

Especially in multi-side inspections, it becomes clear how strongly the spatial distribution of features shapes the overall system architecture.

Influence on the system architecture

This article has focused on where and how the relevant structures appear within the field of view.

Spatial distribution is not a secondary detail.

It is one of the fundamental design parameters in AI-based machine vision systems.

In the next part of the series, we will look at the optical properties of the features themselves.

Reflective or diffuse? Transparent, glossy or matte?

This is often what decides which illumination strategy truly works, and whether the AI ultimately sees what we believe it sees.
